In an ideal world, organisations would approach customer satisfaction measurement by establishing what is important to their customers before they decide what they are going to ask. They would use surveys to identify what is driving satisfaction, what satisfaction means to different groups of customers and what they can and cannot do to influence satisfaction levels. They would evaluate, explore and learn, and then take action where it counts. In doing so, they would seek to make more customers satisfied and make customers more satisfied. Satisfaction measurement would feed into their service improvement cycle and be an ongoing and vital part of their business intelligence. They would use a variety of measurement methods and measure again when the time was right.

In another world, satisfaction measurement has been characterised by asking what others want you to ask and asking a whole lot of other things that you should already know. It has been based on a faulty rating scale and an unhealthy preoccupation with a standardised methodology and statistical reliability. Organisations worry if satisfaction has gone down and are pleased if it has gone up. External reporting seemingly matters more than action to improve customer satisfaction, and so the next survey simply goes in the diary. Of course, if you are not required to do a survey, other priorities may seem more pressing.

Many small housing associations are undoubtedly making valuable use of satisfaction measurement. It has certainly become apparent to us that there is a growing enthusiasm and will among small associations to pursue more sophisticated approaches to understanding customer satisfaction and to take purposeful action in response to what they learn. We are working with some of these organisations and our approach to satisfaction research has been designed specifically with smaller associations in mind.

The methods we use provide a picture not just of satisfaction levels but of the underlying themes and issues which are driving overall satisfaction. As well as offering you flexibility in both what you ask and how residents’ views are obtained, our questions are structured so as to identify satisfaction at specific points on the customer’s service experience journey.

- Findings can be used to plan and prioritise

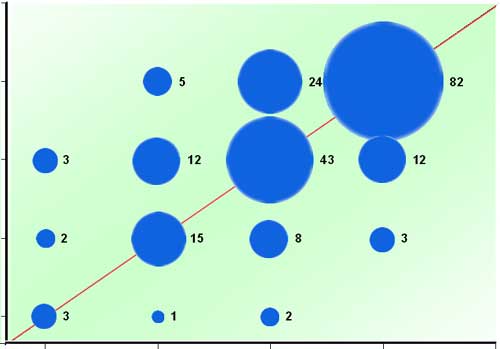

For example, in a recent survey we found reasonably high levels of satisfaction with both maintenance and customer service. However, we also identified a sharp fall-off in satisfaction between the point of first contact and the outcome of the experience. In other words, residents were saying that they were pretty satisfied at the beginning of the process but far less satisfied by the end. In the case of maintenance, when we segmented the results by whether the work was carried out by the in-house service or an external contractor, the fall-off became even more apparent. There was clearly a service issue that the headline figures had not revealed.
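To make the idea of a fall-off concrete, here is a minimal sketch of how it might be calculated. It is not our production tooling: the 1-5 scoring scale, the stage names `first_contact` and `outcome`, the provider labels and every score below are assumptions invented purely for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical responses: (provider, journey stage, score on a 1-5 scale).
# Illustrative values only, not figures from any real survey.
responses = [
    ("in-house", "first_contact", 5),
    ("in-house", "first_contact", 4),
    ("in-house", "outcome", 4),
    ("in-house", "outcome", 4),
    ("contractor", "first_contact", 5),
    ("contractor", "first_contact", 4),
    ("contractor", "outcome", 2),
    ("contractor", "outcome", 3),
]


def stage_means(rows):
    """Return {provider: {stage: mean score}}."""
    grouped = defaultdict(lambda: defaultdict(list))
    for provider, stage, score in rows:
        grouped[provider][stage].append(score)
    return {
        provider: {stage: mean(scores) for stage, scores in stages.items()}
        for provider, stages in grouped.items()
    }


def fall_off(rows, start="first_contact", end="outcome"):
    """Return {provider: mean score at `start` minus mean score at `end`}."""
    means = stage_means(rows)
    return {
        provider: stages[start] - stages[end]
        for provider, stages in means.items()
        if start in stages and end in stages
    }


drops = fall_off(responses)
for provider, drop in sorted(drops.items()):
    print(f"{provider}: fall-off of {drop:.1f} points")
```

In this made-up data both providers start from the same average at first contact, so a headline figure would hide the fact that almost all of the drop sits with one of them.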

Because our approach is built around the use of secure and robust databases into which we import resident demographics, we can at the touch of a button analyse satisfaction by any population characteristic supplied (for example age, ethnicity or location). Not only does this mean you can avoid asking residents to supply information you already hold, it enables you to establish whether you need to target specific groups in your efforts to improve satisfaction levels. For example, in another recent survey we were able to identify that anti-social behaviour was an issue in one or two specific locations rather than a concern of all residents. By combining this analysis with free-text comments, we were able to build a rich picture of local experiences.
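The principle of joining survey answers to information you already hold can be sketched in a few lines. Again, this is only an illustration under stated assumptions: the resident ids, the field names `age_band` and `location`, the estate names and the answers are all hypothetical, and a real database would do the same job with a join and a group-by.

```python
from collections import defaultdict

# Hypothetical resident records already held by the association.
residents = {
    101: {"age_band": "18-34", "location": "Estate A"},
    102: {"age_band": "65+", "location": "Estate A"},
    103: {"age_band": "35-64", "location": "Estate B"},
    104: {"age_band": "65+", "location": "Estate B"},
}

# Hypothetical survey answers keyed by resident id. True means the
# resident reported anti-social behaviour as a problem.
answers = {101: True, 102: True, 103: False, 104: False}


def share_by(characteristic, residents, answers):
    """Share of respondents flagging the issue, per value of a characteristic."""
    counts = defaultdict(lambda: [0, 0])  # value -> [flagged, total]
    for resident_id, flagged in answers.items():
        record = residents.get(resident_id)
        if record is None:
            continue  # no demographic record to join against
        value = record[characteristic]
        counts[value][0] += int(flagged)
        counts[value][1] += 1
    return {value: flagged / total for value, (flagged, total) in counts.items()}


by_location = share_by("location", residents, answers)
by_age = share_by("age_band", residents, answers)
print(by_location)
print(by_age)
```

Because the demographic fields come from existing records, the survey itself only needs to carry the resident id and the answer, and the same answers can be re-cut by any other characteristic without going back to residents.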

Such demographic analysis allows you to engage in some creative thinking about expectations and what you can and cannot influence. It has, for example, become received wisdom that older residents tend to be more satisfied than younger ones. So do you just accept this, or do you challenge yourself to address a particular group’s expectations? Is there something about the structure of younger people’s lives that your services could better address?

Oh, and be prepared to be surprised. When we recently carried out an analysis by age, we found that the very youngest residents were by far the most satisfied! In another survey, we found a significant and surprising fall in residents’ satisfaction with their homes in the context of a very substantial improvement programme. Satisfaction measurement requires a spirit of enquiry as well as the comfort of statistical reliability. It can involve you in some pretty tough calls. What, for example, do you do in response to poor satisfaction with a particular service if those respondents are extremely satisfied overall?

Satisfaction measurement is also a really useful vehicle for changing the ways in which you communicate with residents and for encouraging more residents to get involved. We will explore that subject in a future post.
